GlusterFS can be configured to provide persistent storage and dynamic provisioning for OKD. It can run either containerized within OKD (Containerized GlusterFS) or non-containerized on its own nodes (External GlusterFS). For the GlusterFS storage, we will use EC2 volumes (Amazon EBS volumes attached to the EC2 instances).

In this guide, we will focus on setting up GlusterFS on an existing OpenShift 3.10 cluster.

If you need to set up an OpenShift Origin 3.10 environment, please find the relevant instructions here.

Note:

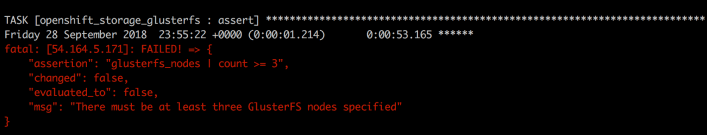

There must be at least 3 Compute nodes in the OpenShift 3.10 cluster. If you need to add Compute nodes to your cluster, please find the steps for adding nodes to the cluster here. Otherwise, you will encounter the error below:

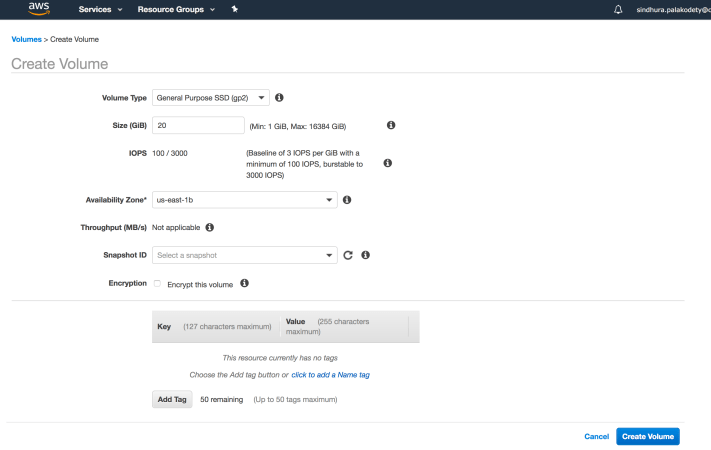
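As a quick pre-check, the number of Compute nodes can be confirmed from the Master Node before going any further. The sketch below is only an illustration: the helper name `count_ready_nodes` is made up for this post, and the label `node-role.kubernetes.io/compute=true` is an assumption based on the default `node-config-compute` node group, so adjust it if your nodes are labelled differently.

```shell
# Hypothetical helper: reads `oc get nodes --no-headers` output on stdin
# (columns: NAME STATUS ROLES AGE VERSION) and prints how many nodes
# have a STATUS of exactly "Ready".
count_ready_nodes() {
  awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Example usage on the Master Node (the label selector is an assumption):
#   oc get nodes -l node-role.kubernetes.io/compute=true --no-headers | count_ready_nodes
```

If the printed number is lower than 3, add Compute nodes before running the GlusterFS playbook.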

Step 1: Create EC2 volumes and attach them to the Compute Nodes in the OpenShift Cluster

- Create the required number of EC2 volumes using the AWS Management Console.

- Attach the EC2 volumes to the Compute Nodes only.

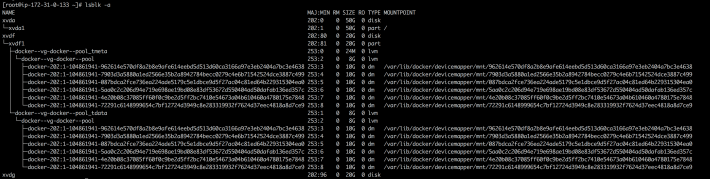

- Verify the output of the lsblk command on the Compute Nodes. The additional device, xvdg, should be listed.
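If you prefer the command line over the AWS Management Console, the same step can be sketched with the AWS CLI. Everything specific here is an assumption and not taken from the setup above: the helper names, the `gp2` volume type, and the device name `/dev/sdg` (which is assumed to show up as `xvdg` inside the instance). The volume must be created in the same availability zone as the instance it is attached to.

```shell
# Hypothetical helper: create one volume and attach it to one instance.
# Arguments: <availability-zone> <size-in-GiB> <instance-id> <device>
create_and_attach_volume() {
  local az="$1" size_gib="$2" instance_id="$3" device="$4"
  local volume_id

  # Create the volume and keep only its ID
  volume_id="$(aws ec2 create-volume \
    --availability-zone "$az" \
    --size "$size_gib" \
    --volume-type gp2 \
    --query 'VolumeId' --output text)" || return 1

  # Wait until the new volume is available before attaching it
  aws ec2 wait volume-available --volume-ids "$volume_id" || return 1

  aws ec2 attach-volume \
    --volume-id "$volume_id" \
    --instance-id "$instance_id" \
    --device "$device" >/dev/null || return 1

  echo "$volume_id"
}

# Hypothetical helper: reads `lsblk -dn -o NAME,TYPE` output on stdin and
# succeeds only if the given name is listed as a whole disk.
disk_is_listed() {
  awk -v dev="$1" '$1 == dev && $2 == "disk" { found = 1 } END { exit !found }'
}

# Example usage (all values are placeholders):
#   create_and_attach_volume us-east-1a 50 i-0123456789abcdef0 /dev/sdg
# Then, on each Compute Node:
#   lsblk -dn -o NAME,TYPE | disk_is_listed xvdg && echo "xvdg is attached"
```

Run the first helper once per Compute Node so that each node ends up with its own volume.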

Step 2: Modify inventory file to add GlusterFS details

Perform the steps below on the Master Node:

- Take a backup of the existing inventory file (/etc/ansible/hosts).

cp /etc/ansible/hosts /etc/ansible/hosts.orig

- Modify the inventory file to add details related to GlusterFS storage as shown below:

vi /etc/ansible/hosts

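- Notice the GlusterFS-specific content: the glusterfs entry under [OSEv3:children], the two openshift_storage_glusterfs_* variables under [OSEv3:vars], and the [glusterfs] host group at the end: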

    # Create an OSEv3 group that contains the masters, nodes, and etcd groups
    [OSEv3:children]
    masters
    nodes
    etcd
    glusterfs

    # Set variables common for all OSEv3 hosts
    [OSEv3:vars]
    openshift_storage_glusterfs_namespace=app-storage
    openshift_storage_glusterfs_storageclass=true

    # SSH user, this user should allow ssh based auth without requiring a password
    ansible_ssh_user=root

    # Deployment type: origin or openshift-enterprise
    openshift_deployment_type=origin

    # resolvable domain (for testing you can use external ip of the master node)
    openshift_master_default_subdomain=54.164.5.171.nip.io
    openshift_hosted_manage_registry=true
    openshift_hosted_manage_router=true
    openshift_router_selector='node-role.kubernetes.io/infra=true'
    openshift_registry_selector='node-role.kubernetes.io/infra=true'
    openshift_master_api_port=443

    # external ip of the master node
    openshift_master_cluster_hostname=54.164.5.171.nip.io

    # external ip of the master node
    openshift_master_cluster_public_hostname=54.164.5.171.nip.io
    openshift_master_console_port=443
    openshift_docker_insecure_registries=172.30.0.0/16
    openshift_master_identity_providers=[{'name': 'htpasswd_auth', 'login': 'true', 'challenge': 'true', 'kind': 'HTPasswdPasswordIdentityProvider'}]

    # host group for masters
    [masters]
    54.164.5.171

    # host group for etcd
    [etcd]
    54.164.5.171

    # host group for nodes, includes region info
    [nodes]
    54.164.5.171 openshift_node_group_name='node-config-master'
    52.90.165.132 openshift_node_group_name='node-config-compute'
    54.86.70.56 openshift_node_group_name='node-config-compute'
    18.212.236.225 openshift_node_group_name='node-config-compute'
    18.208.130.47 openshift_node_group_name='node-config-infra'
    54.221.121.93 openshift_node_group_name='node-config-infra'

    [glusterfs]
    52.90.165.132 glusterfs_devices='[ "/dev/xvdg" ]'
    54.86.70.56 glusterfs_devices='[ "/dev/xvdg" ]'
    18.212.236.225 glusterfs_devices='[ "/dev/xvdg" ]'
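Before running the playbook, it can be worth checking that the edited inventory really lists three hosts in the glusterfs group. The helper below is a minimal sketch and not part of openshift-ansible: the name `count_group_hosts` is made up, and it assumes a plain INI inventory in which every host sits on its own line under its group header.

```shell
# Hypothetical helper: print the number of host lines in one group of an
# INI-style Ansible inventory.
# Arguments: <group-name> <inventory-path>
count_group_hosts() {
  awk -v group="[$1]" '
    /^\[/ { in_group = ($0 == group); next }   # a section header switches groups
    in_group && NF && $1 !~ /^#/ { n++ }       # count non-empty, non-comment lines
    END { print n + 0 }
  ' "$2"
}

# Example usage on the Master Node:
#   count_group_hosts glusterfs /etc/ansible/hosts
# Ansible can also list the hosts it resolves for the group:
#   ansible -i /etc/ansible/hosts glusterfs --list-hosts
```

For the inventory used in this guide, the helper should report 3 for the glusterfs group.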

Step 3: Run the OpenShift-Ansible playbook to set up GlusterFS

Perform the steps below on the Master Node:

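For an installation onto an existing OKD cluster, run the playbook from the root of the openshift-ansible directory (the playbook path below is relative to it):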

ansible-playbook -i /etc/ansible/hosts playbooks/openshift-glusterfs/config.yml

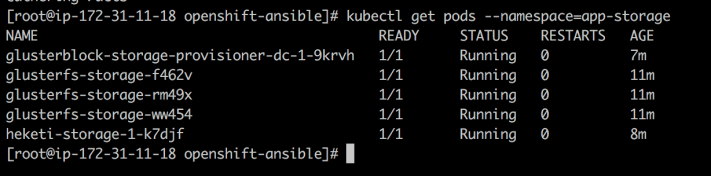
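Because the playbook path is relative, running the command from the wrong directory fails straight away. A small wrapper like the one below can guard against that. It is only a sketch: the function name is made up, and the location of your openshift-ansible checkout is an assumption you pass in as the first argument.

```shell
# Hypothetical wrapper: run the GlusterFS playbook from a given
# openshift-ansible checkout.
# Arguments: <openshift-ansible-dir> [inventory-path]
run_glusterfs_playbook() {
  local repo_dir="$1"
  local inventory="${2:-/etc/ansible/hosts}"
  local playbook="playbooks/openshift-glusterfs/config.yml"

  # Fail early if the playbook is not where we expect it
  if [ ! -f "$repo_dir/$playbook" ]; then
    echo "playbook not found under $repo_dir" >&2
    return 1
  fi

  # Run in a subshell so the caller's working directory is left unchanged
  ( cd "$repo_dir" && ansible-playbook -i "$inventory" "$playbook" )
}

# Example usage (the checkout path is a placeholder):
#   run_glusterfs_playbook /root/openshift-ansible
```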

- Once the installation is completed, ensure that the status of the GlusterFS pods is healthy using the command:

kubectl get pods --namespace=app-storage

- Verify GlusterFS Storage Class using the commands:

kubectl get sc

kubectl describe sc glusterfs-storage
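The two checks above can be taken one step further by scripting the pod check and by requesting a small volume to confirm that dynamic provisioning works. This is a rough sketch under a few assumptions: the helper names, the claim name, the 1Gi size, and the ReadWriteOnce access mode are all illustrative choices, and only the storage class name glusterfs-storage comes from the setup above.

```shell
# Hypothetical helper: reads `kubectl get pods --no-headers` output on stdin
# (columns: NAME READY STATUS RESTARTS AGE) and succeeds only if there is at
# least one pod and every pod is Running with all of its containers ready.
all_pods_ready() {
  awk '
    { split($2, r, "/"); total++ }
    $3 != "Running" || r[1] != r[2] { bad++ }
    END { exit (total == 0 || bad > 0) }
  '
}

# Hypothetical helper: create a small test claim against the GlusterFS
# storage class.
# Arguments: <namespace> <claim-name>
create_test_pvc() {
  local namespace="$1" claim_name="$2"
  kubectl create --namespace="$namespace" -f - <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: $claim_name
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: glusterfs-storage
  resources:
    requests:
      storage: 1Gi
EOF
}

# Example usage (project and claim names are placeholders):
#   kubectl get pods --namespace=app-storage --no-headers | all_pods_ready && echo "GlusterFS pods are healthy"
#   create_test_pvc myproject gluster-test-claim
#   kubectl get pvc gluster-test-claim --namespace=myproject
```

If provisioning works, the claim should move to the Bound status shortly after it is created. Delete the test claim afterwards so the volume is released.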

Disclaimer: All data and information provided on this site is for informational and learning purposes only. cloudliftandshift.com makes no representations as to accuracy, completeness, currentness, suitability, or validity of any information on this site and will not be liable for any errors, issues, or any losses, damages arising from its display or use. This is a personal weblog. The opinions expressed here represent my own and not those of anyone.
